Using computers to aid in the complex task of weather prediction is not all that new, at least on the timescale of modern technology. In fact, computers have been used to help forecast the weather almost since the first electronic, general-purpose computer debuted. In 1950, Jule Charney, John von Neumann, and their colleagues used the ENIAC (Electronic Numerical Integrator and Computer) to solve a simple (by today's standards) set of mathematical equations predicting the air flow in the upper levels of the atmosphere. The success of their experiment led to the formation of the first branch of the U.S. Government dedicated to numerical weather prediction (that is, using computers to help forecast the weather). Today that organization, now known as NCEP (the National Centers for Environmental Prediction), continues to improve the accuracy and skill of numerical weather prediction.

ENIAC Forecast Ca. 1950 - Source: NCEP

Advancements in numerical weather prediction over the years have come from several sources. The first is improvement of the mathematical equations the computer solves. Expressing how the fluid atmosphere behaves in mathematical form is a task that is still being refined today: the equations must capture how temperature, pressure, and humidity change in the real world, a problem further complicated by the Earth's rotation. Second, the equations need numbers (observational data) as input before they can produce an answer (a forecast), and this is another area of major progress. Satellites and even commercial aircraft now help fill the large gaps where the atmosphere was once only sparsely sampled by weather balloons. Surface weather stations and radar have also proliferated since those early computer experiments of the 1950s, and they play important roles in supplying data to the models.

Early TIROS Satellite Imagery - Source: NOAA

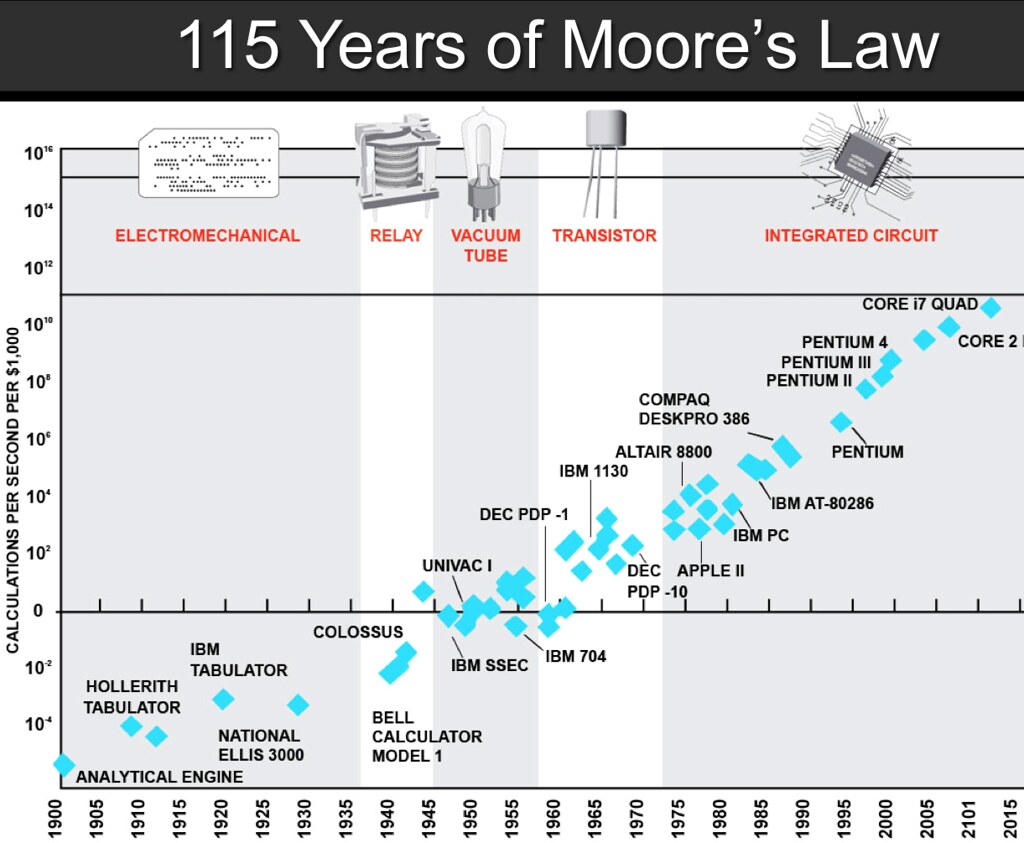

Finally, enormous increases in computing power since that first ENIAC forecast in 1950 now let today's computers run far more complex numerical weather models far more quickly. To put this increase into perspective, ENIAC, with more than 17,000 vacuum tubes and a footprint comparable to the floor area of some homes, was less powerful than a simple pocket calculator is today. Moore's Law, first proposed by Gordon Moore in 1965 and revised by him in 1975, holds that the number of transistors on a computer chip doubles roughly every two years. Even though ENIAC used vacuum tubes rather than transistors, its computing power can still be plotted on the exponential curve of Moore's Law, a trend that still holds even with today's computational advances. Measured in operations per second, ENIAC could perform about 500, while today's supercomputers can perform trillions or even quadrillions.
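The doubling rule lends itself to a quick back-of-the-envelope check. The Python sketch below is illustrative only: the function names `growth_factor` and `doublings_between` are hypothetical, the two-year doubling period is the rule of thumb quoted above, and the 1 petaflop (10^15 operations per second) figure is an assumed round number for a modern supercomputer, not a measurement of any specific machine.

```python
import math


def growth_factor(years: float, doubling_period_years: float = 2.0) -> float:
    """Multiplicative growth after `years` if capacity doubles every
    `doubling_period_years` (the Moore's Law rule of thumb)."""
    return 2.0 ** (years / doubling_period_years)


def doublings_between(start: float, end: float) -> float:
    """Number of doublings needed to grow from `start` to `end`."""
    return math.log2(end / start)


if __name__ == "__main__":
    # 1965 -> 2015 at one doubling every two years is 25 doublings.
    print(f"{growth_factor(2015 - 1965):,.0f}")  # 33,554,432
    # ENIAC's ~500 operations/s versus an assumed 1 petaflop (1e15) machine.
    print(f"{doublings_between(500, 1e15):.1f}")  # 40.9
```

At one doubling every two years, the 50 years from 1965 to 2015 amount to 25 doublings, a factor of more than 33 million. Going from ENIAC's roughly 500 operations per second to the assumed petaflop machine takes about 41 doublings.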

Moore's Law 1900-2015 - Source: Steve Jurvetson

Recently, the National Weather Service (NWS) added a pair of new supercomputers, nicknamed "Luna" and "Surge", to its stable of ever-improving "weather-crunching" machines. Together, the two can perform 5.78 petaflops (quadrillion floating-point operations per second), compared with the 776 teraflops (trillion floating-point operations per second) of the supercomputers the NWS used previously. This added processing power will let NWS scientists expand water quantity forecasts to better predict droughts and floods, as well as improve hurricane track and intensity forecasts. Curious how fast the world's most powerful supercomputers are right now? The TOP500 project publishes an updated ranking twice a year.
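As a quick worked check of the NWS figures quoted above (treating both numbers as combined capacity figures reported on the same basis, which is an assumption), the upgrade amounts to roughly a sevenfold increase:

$$
\frac{5.78\ \text{petaflops}}{776\ \text{teraflops}}
= \frac{5.78 \times 10^{15}\ \text{FLOPS}}{7.76 \times 10^{14}\ \text{FLOPS}}
\approx 7.4
$$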